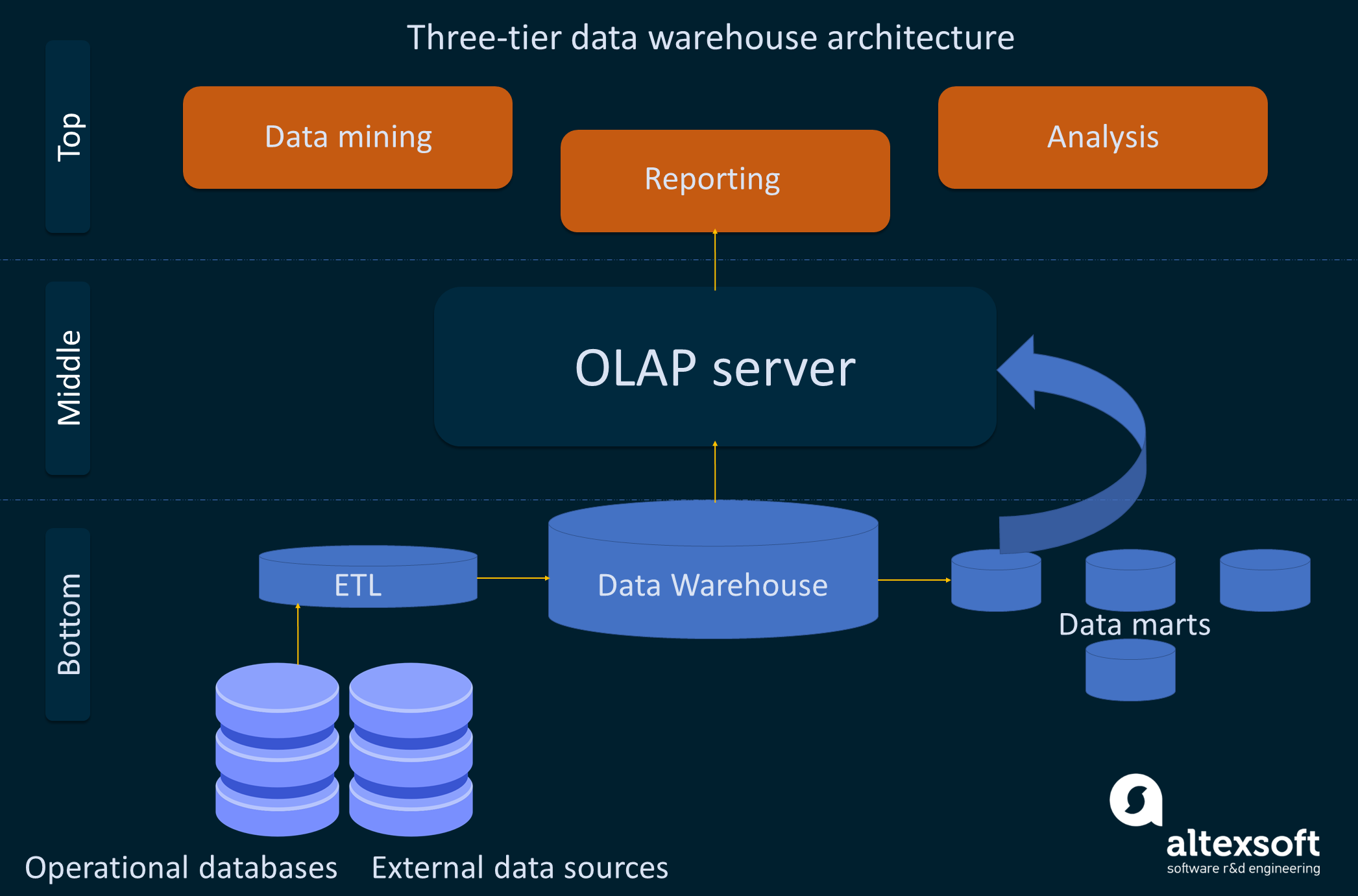

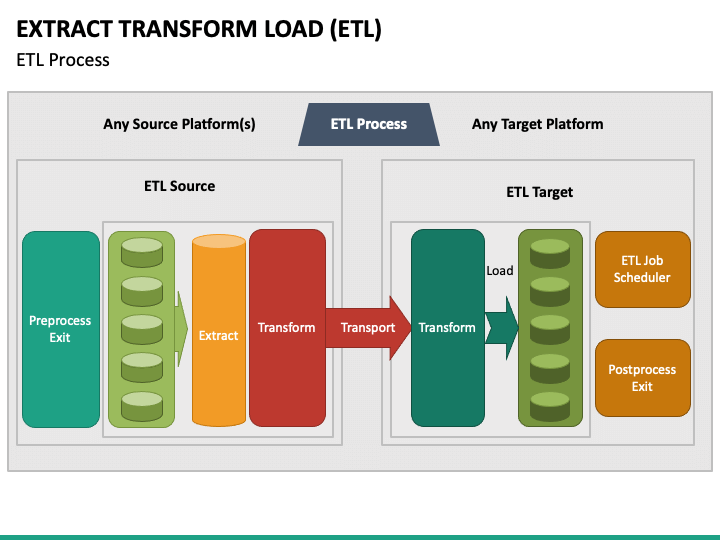

Because data extraction takes time, it is common to execute the three phases as a pipeline. A properly designed ETL system extracts data from the source systems, enforces data quality and consistency standards, conforms data so that separate sources can be used together, and finally delivers data in a presentation-ready format so that application developers can build applications and end users can make decisions. Data extraction involves extracting data from homogeneous or heterogeneous sources; data transformation processes the data by cleaning it and converting it into a proper storage format or structure for querying and analysis; finally, data loading describes the insertion of data into the final target database, such as an operational data store, a data mart, a data lake, or a data warehouse.
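To make the three phases concrete, here is a minimal sketch in Python. Everything in it is an assumption made for illustration: the sample CSV text, the `sales` table, and the function names `extract`, `transform`, `load`, and `run_etl` are hypothetical, and an in-memory SQLite database stands in for the target system. Generators are used so that each row flows through all three phases as soon as it is read. That is a simple, single-threaded form of pipelining, not a concurrent one.

```python
import csv
import io
import sqlite3

# Hypothetical source data; a real extract step would read a file, API, or database.
RAW_CSV = """id,name,amount
1, Alice ,10.50
2,bob,
3,Carol,7
"""


def extract(text):
    """Extract: yield one raw row (a dict of strings) at a time from CSV text."""
    yield from csv.DictReader(io.StringIO(text))


def transform(rows):
    """Transform: trim and normalize names, parse numbers, skip rows with no amount."""
    for row in rows:
        amount = row["amount"].strip()
        if not amount:
            continue  # simple data-quality rule: drop incomplete rows
        yield int(row["id"]), row["name"].strip().title(), float(amount)


def load(records, conn):
    """Load: insert the cleaned records into the target table."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS sales (id INTEGER PRIMARY KEY, name TEXT, amount REAL)"
    )
    conn.executemany("INSERT INTO sales VALUES (?, ?, ?)", records)
    conn.commit()


def run_etl(text, conn):
    """Chain the phases; rows are pulled through lazily, one at a time."""
    load(transform(extract(text)), conn)


conn = sqlite3.connect(":memory:")  # stand-in for a data warehouse or data mart
run_etl(RAW_CSV, conn)
rows = conn.execute("SELECT id, name, amount FROM sales ORDER BY id").fetchall()
print(rows)
```

Because `load` consumes a generator, no phase has to wait for the previous one to finish the whole data set. A production pipeline would more likely run the phases in separate workers or processes, with queues or staging tables between them.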

The ETL process became a popular concept in the 1970s and is often used in data warehousing.

In computing, extract, transform, load (ETL) is the general procedure of copying data from one or more sources into a destination system which represents the data differently from the source(s) or in a different context than the source(s).
